In synchronous systems, we often assume a request is processed “exactly once.” In asynchronous pipelines, that assumption is rarely realistic.

In production, duplicate jobs can occur for reasons that are entirely normal:

- A worker crashes after committing to the database but before acknowledging the message

- Network timeouts trigger retries upstream or at the broker level

- A scheduler/producer emits duplicates due to race conditions

- A consumer restarts/rebalances and reprocesses messages

- Kubernetes kills a pod mid-execution

For that reason, the right question in async pipelines is not:

“How do we ensure it never runs twice?”

but rather:

“If it runs again, will the system still converge to the correct final state?”

That is the essence of idempotency.

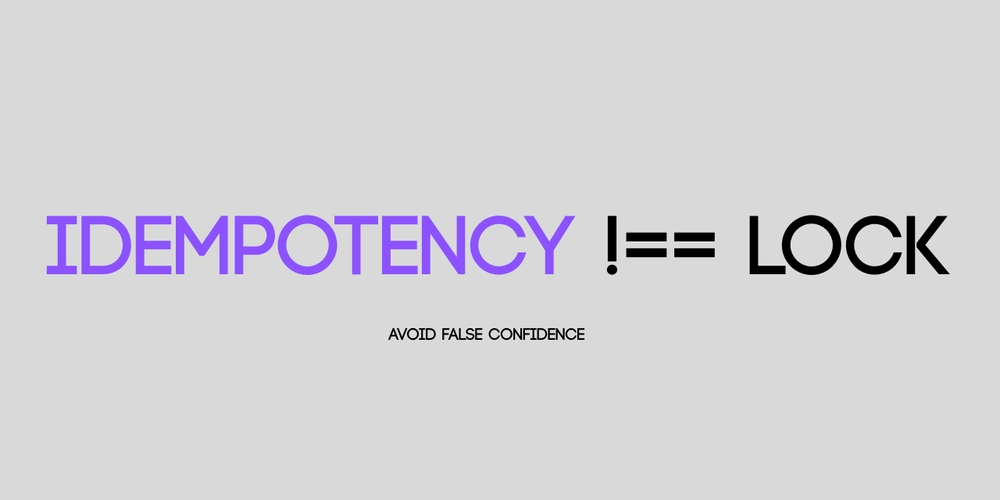

1) Idempotency is not “preventing re-runs”

Some systems try to “prevent re-runs” by adding locks, reducing retries, or increasing timeouts. These measures make duplicates rarer but cannot rule them out. They also make failures harder to observe, so when problems do surface, they tend to be more costly.

A more robust approach is to design for correctness:

An idempotent handler may run multiple times, but after the first successful run, further runs either leave the system state unchanged or keep it correct according to the intended outcome.
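A toy Python contrast may help make the definition concrete. Plain dictionaries stand in for persisted state here, and the function and field names are illustrative only: setting an absolute target state converges however many times it runs, while applying a relative delta does not.

```python
# Plain dicts stand in for persisted state; all names here are hypothetical.

def mark_invoiced(order: dict) -> None:
    # Idempotent: sets an absolute target state, so 1 run and N runs agree.
    order["status"] = "INVOICED"


def add_points(account: dict, amount: int) -> None:
    # Not idempotent: applies a relative change, so every re-run moves the state again.
    account["points"] += amount


order = {"status": "CREATED"}
account = {"points": 0}
for _ in range(2):  # simulate the same job being delivered twice
    mark_invoiced(order)
    add_points(account, 10)

print(order["status"])    # INVOICED
print(account["points"])  # 20, not the intended 10
```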

2) Three real-world scenarios (and the corresponding idempotency strategies)

Case 1 — Notifications/push: duplicates do not corrupt data, but degrade trust

Context

An event is published after a user action (placing an order, creating a reminder, changing a status…), and a worker calls FCM/OneSignal to send a push notification.

How duplicates happen

- The provider API call succeeds

- The request times out, so the worker assumes failure and retries

- The retry sends the same notification again

Appropriate approach (soft idempotency)

You may not need strict “exactly once,” but you should aim for at-most-once per intent.

A practical design:

- Generate a `notification_intent_id` (e.g., `user_id + action_id + template_id`)

- Store intent state: `PENDING → SENT`

- The worker sends only if the intent is not already `SENT`
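A minimal sketch of this design, assuming a SQLite table as the intent store. The table name `notification_intents`, the colon-joined key format, and the `send_push` callback (standing in for an FCM/OneSignal client call) are hypothetical; only the PENDING → SENT logic comes from the design above.

```python
import sqlite3
from typing import Callable

# Hypothetical schema: one row per notification intent.
SCHEMA = """
CREATE TABLE IF NOT EXISTS notification_intents (
    intent_id TEXT PRIMARY KEY,
    status    TEXT NOT NULL CHECK (status IN ('PENDING', 'SENT'))
)
"""


def intent_id_for(user_id: str, action_id: str, template_id: str) -> str:
    # Deterministic key: the same logical intent always maps to the same id.
    return f"{user_id}:{action_id}:{template_id}"


def handle_notification(
    conn: sqlite3.Connection,
    user_id: str,
    action_id: str,
    template_id: str,
    send_push: Callable[[str, str], None],
) -> bool:
    """Skip sending if this intent is already SENT. Returns True if a push was sent."""
    intent_id = intent_id_for(user_id, action_id, template_id)
    with conn:  # record the intent as PENDING on the first attempt; no-op afterwards
        conn.execute(
            "INSERT OR IGNORE INTO notification_intents (intent_id, status) "
            "VALUES (?, 'PENDING')",
            (intent_id,),
        )
    (status,) = conn.execute(
        "SELECT status FROM notification_intents WHERE intent_id = ?", (intent_id,)
    ).fetchone()
    if status == "SENT":
        return False  # a previous attempt already delivered this intent
    # Soft idempotency: a crash between send_push and the UPDATE below can still
    # cause one resend on retry, and concurrent workers would need an atomic claim.
    send_push(user_id, template_id)
    with conn:
        conn.execute(
            "UPDATE notification_intents SET status = 'SENT' WHERE intent_id = ?",
            (intent_id,),
        )
    return True
```

Calling `handle_notification` twice with the same user, action, and template sends one push. As the comments note, this is soft idempotency: a crash between sending and marking `SENT` can still produce one duplicate.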

Trade-off

You introduce a small persistence layer (a notifications table or an outbox-like store). In return:

- Retries become safe

- You gain auditability

- You avoid duplicate notifications

Case 2 — Points/wallet adjustments: duplicates can produce severe inconsistencies

Context

A worker processes events that adjust points or balances, typically:

- writing a transaction record

- updating the balance

How duplicates happen

- The worker commits the balance update

- It crashes before acknowledging the message

- The broker re-delivers the message

- The worker processes it again, applying the adjustment twice

Appropriate approach (hard idempotency)

For high-risk operations (points, money, inventory), a robust pattern is:

- Treat a ledger as the source of truth

- Treat the balance as derived state (either computed from the ledger or updated consistently alongside it according to ledger rules)

A typical design:

- Assign each adjustment a `transaction_id` (idempotency key)

- Insert a ledger entry with a unique constraint on `transaction_id`

- If the insert conflicts, the adjustment has already been applied

- Update the balance based on ledger logic (depending on the system model)

Key point

Avoid making “balance” a state that can be double-written.

Instead, ensure the ledger entry is immutable and cannot be duplicated.
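One way to sketch this with SQLite, assuming hypothetical `ledger` and `balances` tables: the ledger insert and the balance update share one transaction, so a redelivered message hits the unique key on `transaction_id` and changes nothing. The hypothetical helper `balance_from_ledger` shows the balance as derived state that can always be recomputed from the ledger.

```python
import sqlite3

# Hypothetical schema: the ledger is the source of truth, balances are derived.
SCHEMA = """
CREATE TABLE IF NOT EXISTS ledger (
    transaction_id TEXT PRIMARY KEY,   -- the idempotency key
    account_id     TEXT NOT NULL,
    delta          INTEGER NOT NULL
);
CREATE TABLE IF NOT EXISTS balances (
    account_id TEXT PRIMARY KEY,
    balance    INTEGER NOT NULL
);
"""


def apply_adjustment(
    conn: sqlite3.Connection, transaction_id: str, account_id: str, delta: int
) -> bool:
    """Apply an adjustment once per transaction_id. Returns False on a duplicate."""
    with conn:  # one transaction: ledger entry and balance change commit together
        cur = conn.execute(
            "INSERT OR IGNORE INTO ledger (transaction_id, account_id, delta) "
            "VALUES (?, ?, ?)",
            (transaction_id, account_id, delta),
        )
        if cur.rowcount == 0:
            return False  # unique key already present: applied by an earlier run
        conn.execute(
            "INSERT OR IGNORE INTO balances (account_id, balance) VALUES (?, 0)",
            (account_id,),
        )
        conn.execute(
            "UPDATE balances SET balance = balance + ? WHERE account_id = ?",
            (delta, account_id),
        )
    return True


def balance_from_ledger(conn: sqlite3.Connection, account_id: str) -> int:
    # Derived state: recomputable at any time from the immutable ledger entries.
    (total,) = conn.execute(
        "SELECT COALESCE(SUM(delta), 0) FROM ledger WHERE account_id = ?",
        (account_id,),
    ).fetchone()
    return total
```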

Trade-off

Ledger-based design adds complexity and storage costs, but it buys:

- Clear correctness guarantees

- Strong audit trails

- Easier reconciliation/backfill

Case 3 — Invoice creation + workflow state: duplicates cause workflow inconsistency and stuck states

Context

A pipeline performs:

- create an invoice

- update order status (e.g., `INVOICED`)

- emit the next event

How duplicates happen

- The worker creates the invoice

- It crashes before updating the order status

- A retry runs:

  - invoice creation is skipped because the invoice already exists (the handler treats this as “already processed” and stops)

  - but the order status remains incorrect, so downstream processing gets stuck

Appropriate approach (state-driven idempotency)

You typically need two layers:

- Natural idempotency (ensure-exists semantics)

  - Make the invoice unique by `order_id`

  - Reframe “create invoice” as “ensure invoice exists for this order”

- Workflow guard / state machine

  - Define valid state transitions explicitly

  - If status is already `INVOICED`, skip

  - Otherwise, apply the missing transition to converge the workflow state

Trade-off

This requires a more disciplined definition of workflow states, but in return:

- Retries become safe

- Replay/backfill becomes less risky

3) Three levels of idempotency (to avoid conflating concerns)

- Handler-level dedupe (e.g., `event_id` / `processed_events`): useful, but limited under replay/backfill/out-of-order scenarios

- State-level idempotency (ensure-state / upsert semantics): effective for workflows and state transitions

- Ledger-level idempotency (unique transaction entries): essential for money/points/inventory-like operations

A common mistake is applying a single mechanism everywhere. In practice, the required level should match the risk profile.
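To illustrate why handler-level dedupe is useful but limited, here is a sketch of a `processed_events` table in SQLite, assuming the dedupe row and the handler's writes live in the same database so they can commit atomically. The `counters` table and the `bump_signups` handler are hypothetical.

```python
import sqlite3
from typing import Callable

SCHEMA = """
CREATE TABLE IF NOT EXISTS processed_events (event_id TEXT PRIMARY KEY);
CREATE TABLE IF NOT EXISTS counters (name TEXT PRIMARY KEY, value INTEGER NOT NULL);
"""


def handle_once(
    conn: sqlite3.Connection,
    event_id: str,
    handler: Callable[[sqlite3.Connection], None],
) -> bool:
    """Run handler at most once per event_id. Returns False for a seen event_id."""
    with conn:  # the dedupe row and the handler's writes commit together
        cur = conn.execute(
            "INSERT OR IGNORE INTO processed_events (event_id) VALUES (?)", (event_id,)
        )
        if cur.rowcount == 0:
            return False  # this exact event was already processed
        handler(conn)  # if this raises, the dedupe row is rolled back as well
    return True


def bump_signups(conn: sqlite3.Connection) -> None:
    # Hypothetical non-idempotent side effect: a relative counter increment.
    conn.execute("INSERT OR IGNORE INTO counters (name, value) VALUES ('signups', 0)")
    conn.execute("UPDATE counters SET value = value + 1 WHERE name = 'signups'")
```

Redelivery of the same `event_id` is caught, but a backfill that re-emits the same logical work under a new event ID is not. Catching that case requires state-level or ledger-level keys derived from the business operation itself.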

4) Common misconceptions

- Redis locks do not replace idempotency

  Locks primarily prevent concurrent execution; they do not guarantee correctness under re-execution.

- Correctness should not depend on transport guarantees

  Under at-least-once delivery, Kafka/SQS/RabbitMQ can still deliver duplicates when consumers crash or acknowledgements fail.

- A processed-events table is insufficient if state design is weak

  This is especially true when replay/backfill or out-of-order delivery becomes part of normal operations.
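The first misconception can be shown in a few lines. In this sketch a `threading.Lock` stands in for a distributed lock such as one built on Redis (an assumption made to keep the example self-contained). The lock serializes executions, but a redelivered message runs after the first run has finished, so the lock never sees a conflict and the adjustment is applied twice.

```python
import threading

# threading.Lock stands in for a distributed (e.g. Redis-based) lock in this sketch.
lock = threading.Lock()
balance = {"points": 0}


def handle_adjustment(amount: int) -> None:
    with lock:  # serializes concurrent executions...
        balance["points"] += amount  # ...but a later redelivery still re-applies it


# The broker redelivers the same message after a failed ack: two sequential runs.
handle_adjustment(10)
handle_adjustment(10)
print(balance["points"])  # 20: the lock saw no conflict, yet the result is wrong
```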

Conclusion

In async pipelines, retries and duplicates should be treated as expected behavior. The key is to design such that:

Re-running the same logical work still converges to the correct final state.

When you focus on “the correct state to reach” rather than “the action to perform,” idempotency becomes a natural part of system design—not a patch added late in the process.